Supersedence was supposedly a great new feature for ConfigMgr 2012 (released in… you guessed it) that was part of the Application Model. The App Model held so many promises, but it was actually quite broken until a few service packs and fixes down the road. There are still quite a few defects that are simply “known” by people and add to the general feeling that ConfigMgr is complex.

After a Twitter rant on how Microsoft views its support organisation (and anyone paying for or using it) as a third-class citizen with no rights, apart from being the therapist for whatever sysadmin is on the other end of the call, I became rather frustrated about the sixth most voted UserVoice item. It covers a defect that I had previously raised support calls about within their organisation, and that I spent the better part of a week (at the Microsoft Seattle campus) ranting about to just about anyone who could potentially reach the actual Product-Caretaker to fix the issue. Microsoft may spring out shiny new things, but it honestly sucks at fixing its products. If it’s broken, it’s still just plain broken. And even when it’s fixed, no one tells you it’s fixed apart from during watercooler talk.

How long has supersedence been broken? Since 2012

Update 2017-12-18:

ConfigMgr TechPreview 1712 was released with a specific fix that aims to resolve the behaviour seen in tests 1 and 2. If you are on a support agreement, it seems you could potentially drop it and relay requests via UserVoice only.

What is the scenario?

So, a few things to set up what the actual defect is. Supersedence is the intent to connect two Applications within ConfigMgr and inform the system how it should replace the older one(s). For the defect to show itself, the following has to be in place:

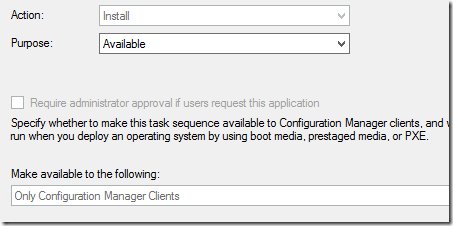

- Application has to be deployed (directly / indirectly) as Available

- Application can be targeted to users / computers

- Application made Available has to replace a previous old version that is installed on the given impacted client

FoxDeploy (Stephen Owen) wrote a great article that sets up the premise for the defect; however, as far as I understand, his target environment deals only with users (or deployments targeted at user collections). Apart from sharing a few thoughts and creating a very popular UserVoice item, there are some things that need to be retested with the newer versions of ConfigMgr (the previous extensive confirmation of this defect was in the ConfigMgr 2012 era, both for myself and FoxDeploy).

There is no release statement from Microsoft that this has been altered, fixed or improved upon as far as the eye can see.

Based on the above we can also conclude that this is not relevant if:

- All deployments are Required

- Supersedence is not used

- Older versions, now being replaced, are not installed on any endpoints
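The conditions above can be expressed as a small check. This is only a sketch to make the logic explicit: the `Deployment` class and its field names are hypothetical and are not part of any ConfigMgr API or SDK.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical model of one application deployment (not a ConfigMgr API)."""
    purpose: str                    # "Available" or "Required"
    supersedes_older_version: bool  # a supersedence relationship exists
    older_version_installed: bool   # the superseded version is on the client


def is_exposed(d: Deployment) -> bool:
    """Return True only when all three conditions for the defect are met."""
    return (
        d.purpose == "Available"
        and d.supersedes_older_version
        and d.older_version_installed
    )
```

For example, `is_exposed(Deployment("Available", True, True))` is true, while changing any one of the three values (a Required purpose, no supersedence, or no older version installed) makes it false.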

Tests

We will retry the following tests on a ConfigMgr 1702 site. Neither 1706 nor 1710 has introduced anything remotely close to fixing this behaviour, and all rumours that people have heard about previous fixes have been pre-CurrentBranch.

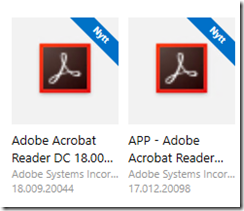

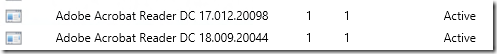

Applications used while testing

Test 1

- Application (Adobe Reader DC 18) will supersede an older version (Adobe Reader DC 17) before creating the deployment

- A deployment will be created that targets our specific computer that has Adobe Reader DC 17 installed

- Deployment will target a computer collection

- Deployment will be set to be made available

Result

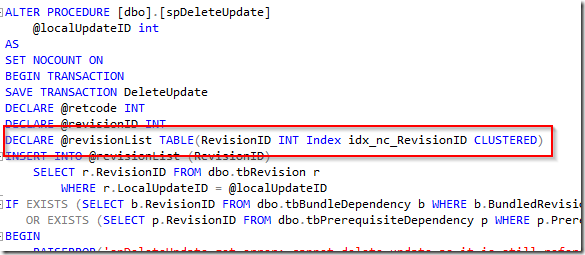

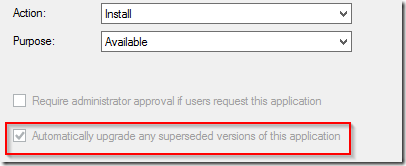

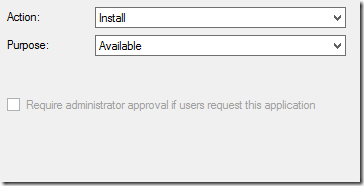

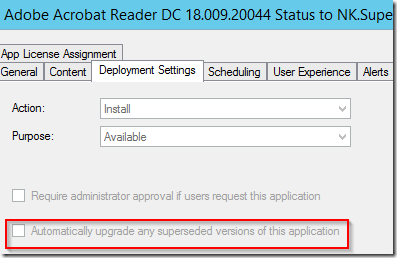

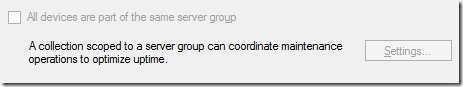

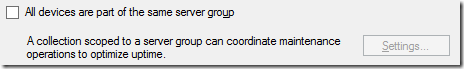

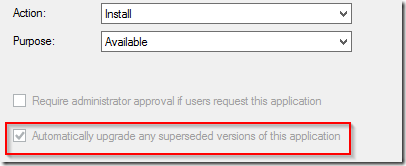

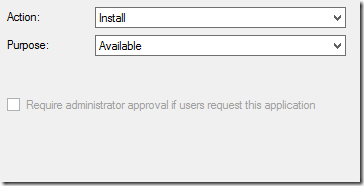

When creating the deployment, the following new check-boxes will become painfully obvious to the administrator. Pay attention, and ConfigMgr will tell you exactly how you are about to wreak havoc within the environment. Even though you are saying “please just offer this as a nice-to-have-thingy”, there is a greyed-out check-box that indicates that a required deployment is technically being created.

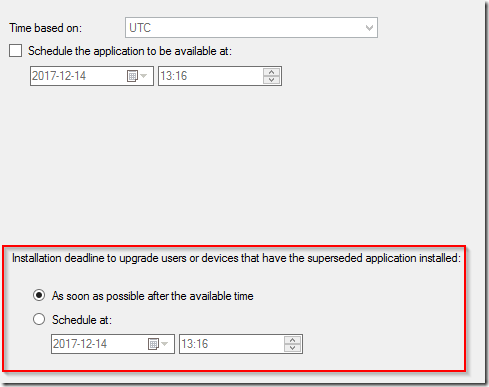

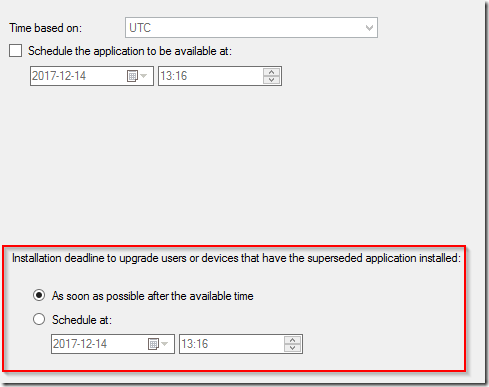

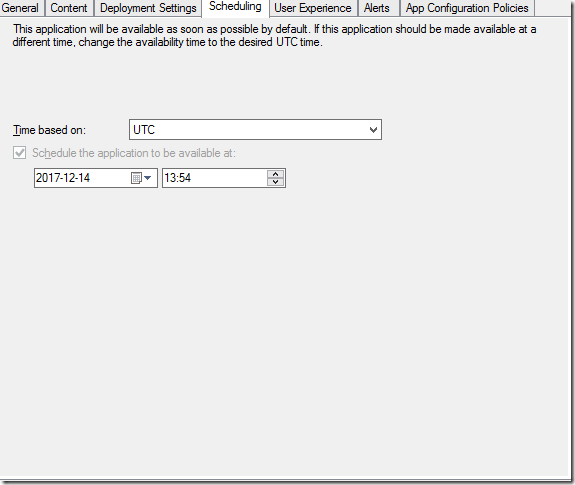

When setting the date/time at which the deployment will be available, it becomes even more painfully obvious that you are in fact creating a required deployment.

As you might have guessed – as soon as the client receives this new policy – Adobe Acrobat Reader DC 17 is upgraded to Adobe Acrobat Reader DC 18.

Consistent with ConfigMgr 2012 in 2012

Test 2

- Application (Adobe Reader DC 18) will supersede an older version (Adobe Reader DC 17) after creating the deployment

- A deployment will be created that targets our specific computer that has Adobe Reader DC 17 installed

- Deployment will target a computer collection

- Deployment will be set to be made available

Result

As you can imagine, the wizard does not present any information regarding supersedence while creating the deployment as Available (as that relationship between the two applications does not exist yet).

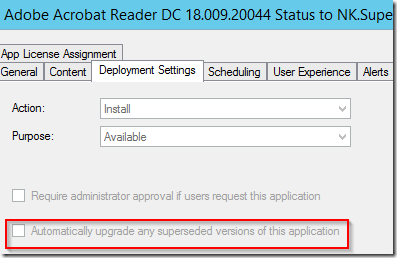

After creating the supersedence relationship and then opening the properties of the specific Available deployment, the following reveals itself. This is the same result FoxDeploy reached when testing in early 2016.

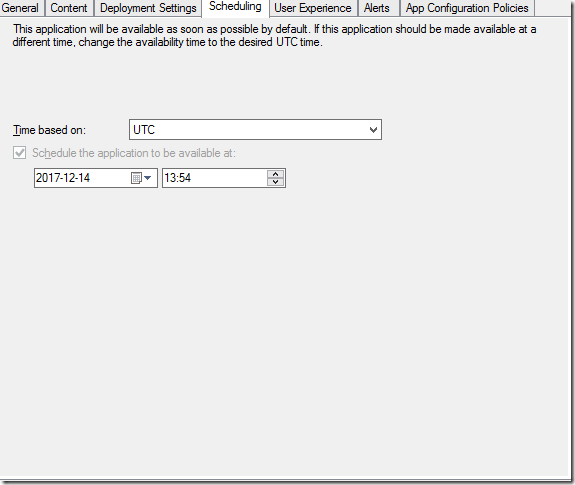

The scheduling leaves no schedule set for when supersedence should be run.

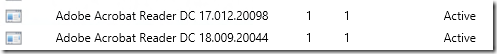

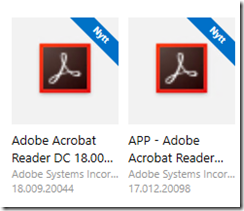

For a brief moment on the client the two versions will reveal themselves.

Unlike FoxDeploy’s testing (which targeted user collections), the upgrade will still occur, and now it runs on a schedule that you cannot control. At the latest, the upgrade will take place at the next Application Evaluation cycle.

Consistent with ConfigMgr 2012 in 2012
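The timing observed in Test 2 can be sketched as follows. This is only an illustration: the function name is hypothetical, and the seven-day default interval is an assumption rather than a value read from the test site. The real interval is whatever the client settings define for re-evaluating deployments.

```python
from datetime import datetime, timedelta


def latest_upgrade_time(
    last_evaluation: datetime,
    cycle: timedelta = timedelta(days=7),  # assumed interval, check client settings
) -> datetime:
    """Upper bound for when the superseding version gets installed.

    Once the supersedence relationship reaches the client, the upgrade
    happens no later than the next evaluation cycle.
    """
    return last_evaluation + cycle
```

With a last evaluation at 08:00 on 1 December 2017 and the assumed seven-day cycle, the upgrade would happen no later than 08:00 on 8 December 2017. Triggering the evaluation manually on the client would only bring that forward.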

Test 3

- Application (Adobe Reader DC 18) will supersede an older version (Adobe Reader DC 17) before deployment is created

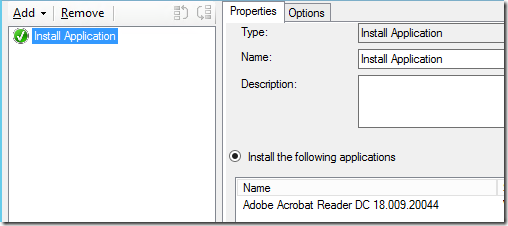

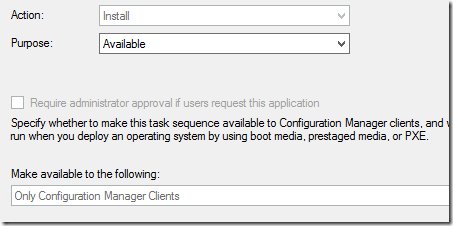

- The applications themselves will not be deployed, but a Task Sequence containing one step installing Adobe Reader DC 18 will be made available.

- A deployment (for the task sequence) will be created that targets our specific computer that has Adobe Reader DC 17 installed

- Deployment will target a computer collection

- Deployment will be set to be made available

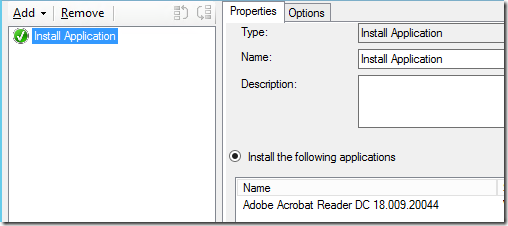

Contents of Task Sequence

Result

As the task sequence only contains the step to install the one specific application, there is no possibility to make it available for PXE-only or any option other than the ConfigMgr client. The Task Sequence is still set to only be available. In my experience it is also advisable to set the Available time back 1h (or 1 day) to get fairly instant results; otherwise you need to wait a bit before the client starts processing the information.

As per the hallway chatter, this seems to be resolved: Adobe Acrobat Reader DC 18 does not cause an automatic upgrade.

Not consistent with ConfigMgr 2012 in 2012

Workaround

What have we learnt? Well, anything that depends on supersedence will get a required deployment instead of an available deployment. There are of course three options to deal with this:

- Script it. PowerShell App Deployment Toolkit offers great ways to do this with control.

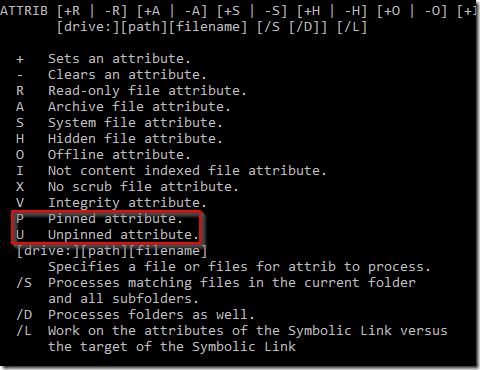

- Set up the supersedence relationship, and then create the available deployment and alter the time for the supersedence from ASAP to a date far far into the future. Perhaps so far that you will not be handling the site any more.

- Follow FoxDeploy’s guidance for user deployments and create the deployment, and then the supersedence relationship.
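For the second option, the “far far into the future” date can be computed rather than guessed. The sketch below is plain date arithmetic and does not talk to ConfigMgr. The function name and the 20-year default are assumptions, and the resulting value would still have to be entered in the deployment’s scheduling settings by hand.

```python
from datetime import datetime


def far_future_deadline(now: datetime, years_ahead: int = 20) -> datetime:
    """Return a date `years_ahead` years after `now` (hypothetical helper)."""
    try:
        return now.replace(year=now.year + years_ahead)
    except ValueError:
        # 29 February has no counterpart in a non-leap target year.
        return now.replace(year=now.year + years_ahead, month=2, day=28)
```

Calling `far_future_deadline(datetime(2017, 12, 18, 9, 0))` gives 18 December 2037 at 09:00, which is hopefully long after anyone still cares about Adobe Reader DC 17.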